Starmind Pico

Is it possible to train a Language Model to run on the RP2040? Yes. Dumb but fast.

Background

I had a TinyPico (RP2040) lying around the office for the last 8 months. During a recent hackathon, I decided to whip it out for a weekend project and thought to myself: I bet I can make a language model run on this.

What I set out to do:

- Find a Language Model architecture that runs well on RP2040

- Train a model

- Run the model

Architecture Analysis

I analyzed five factors of the language model architecture and how each one affects inference speed and output quality:

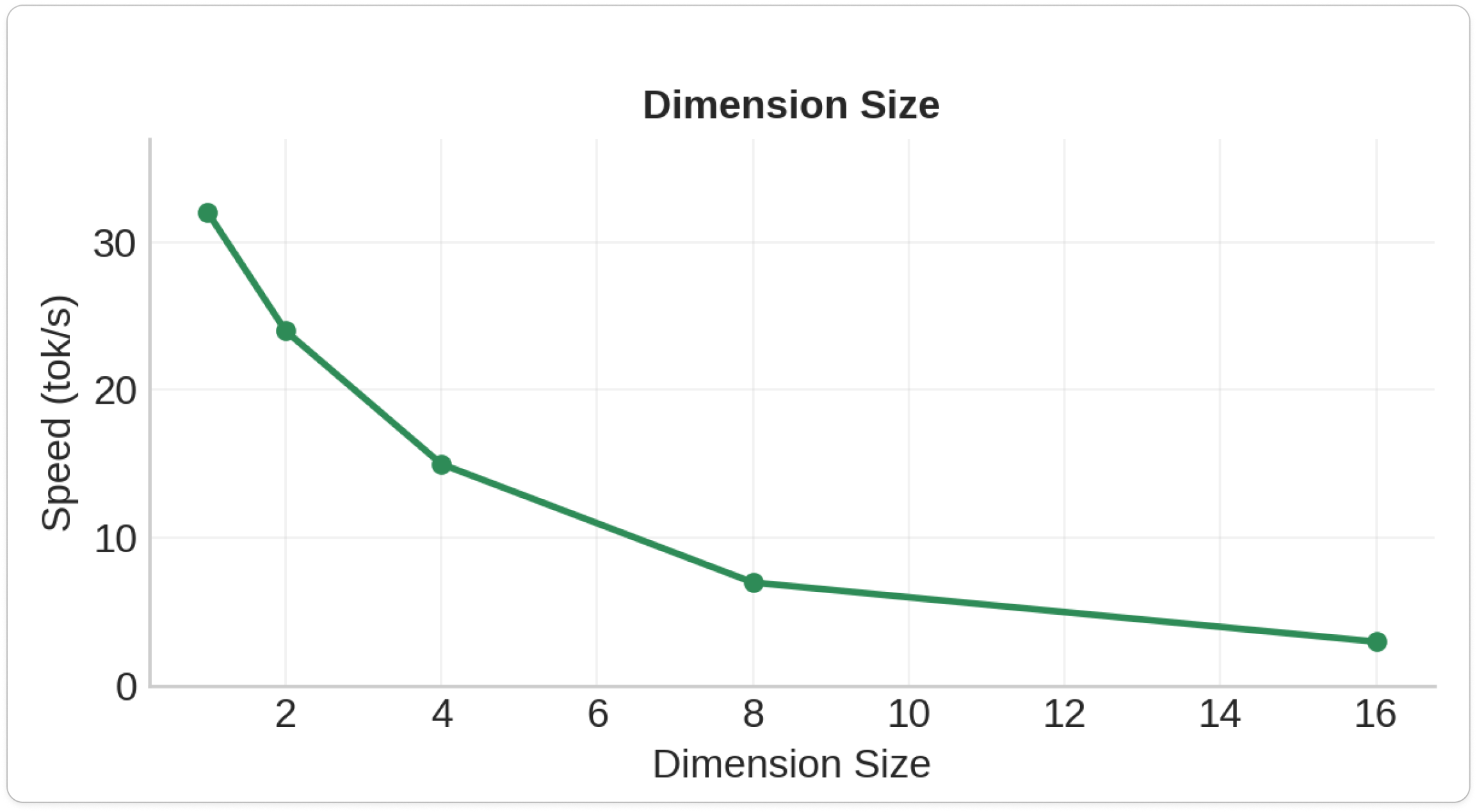

1. Dimension Size

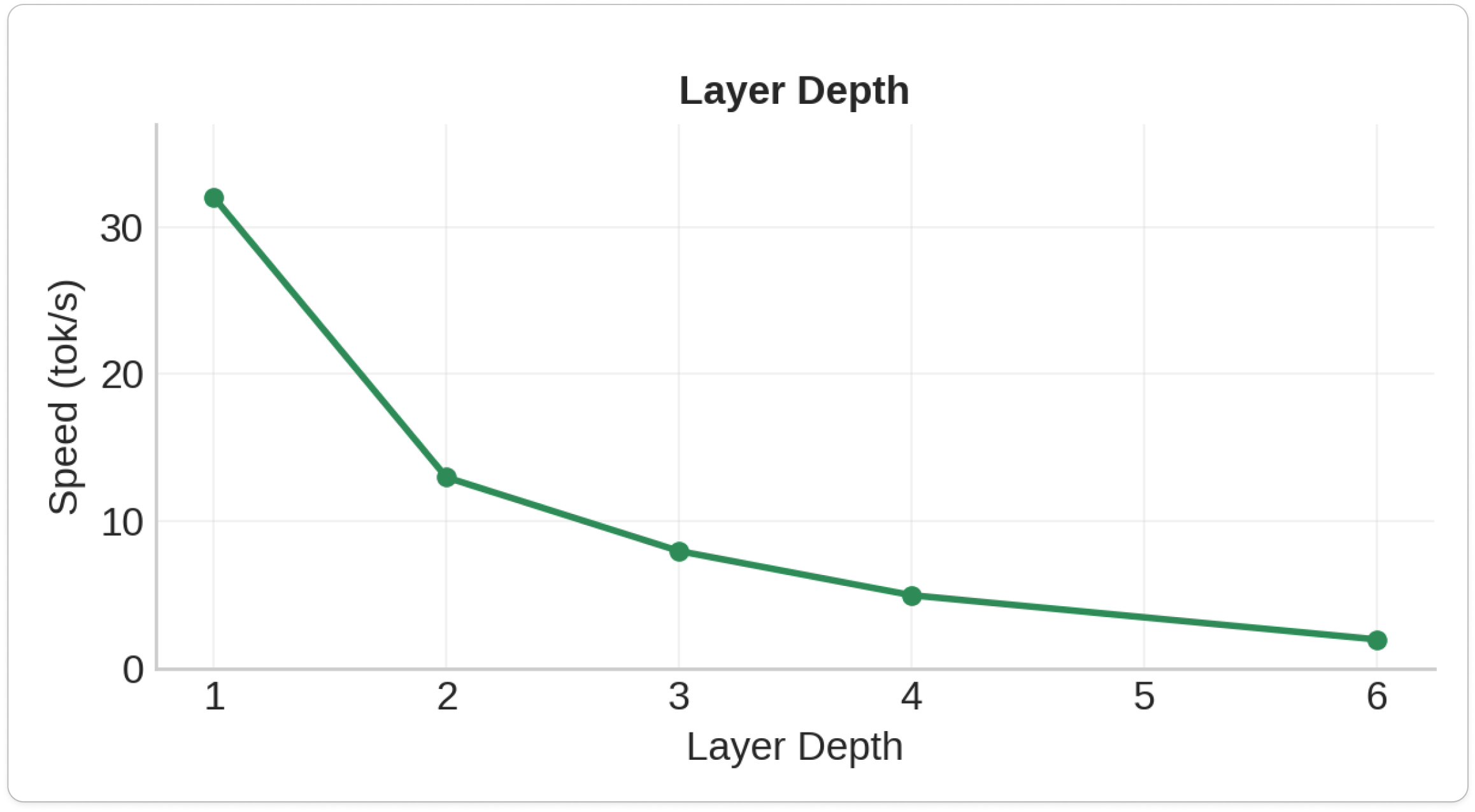

2. Layer Depth

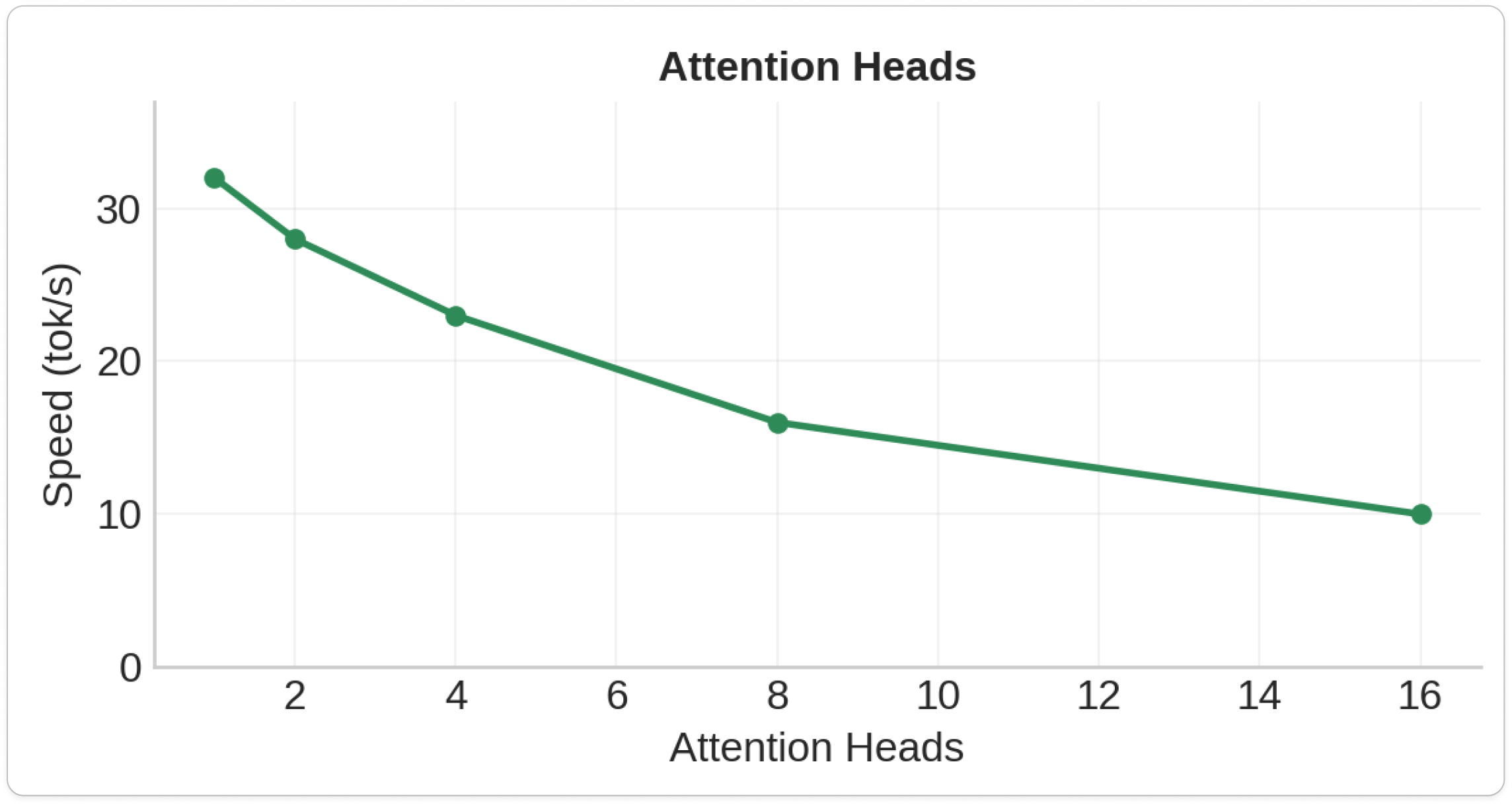

3. Attention Heads

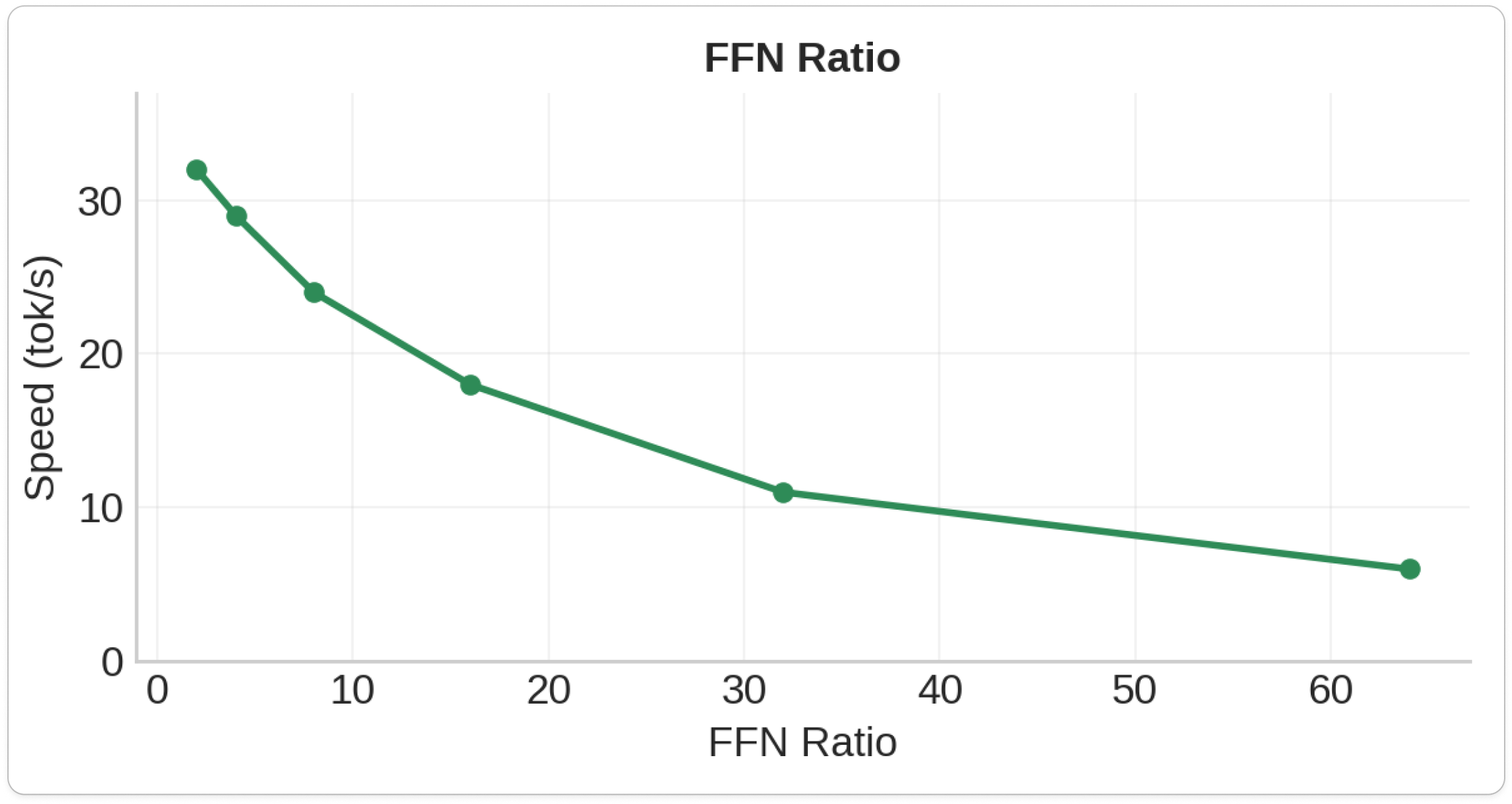

4. FFN Ratio

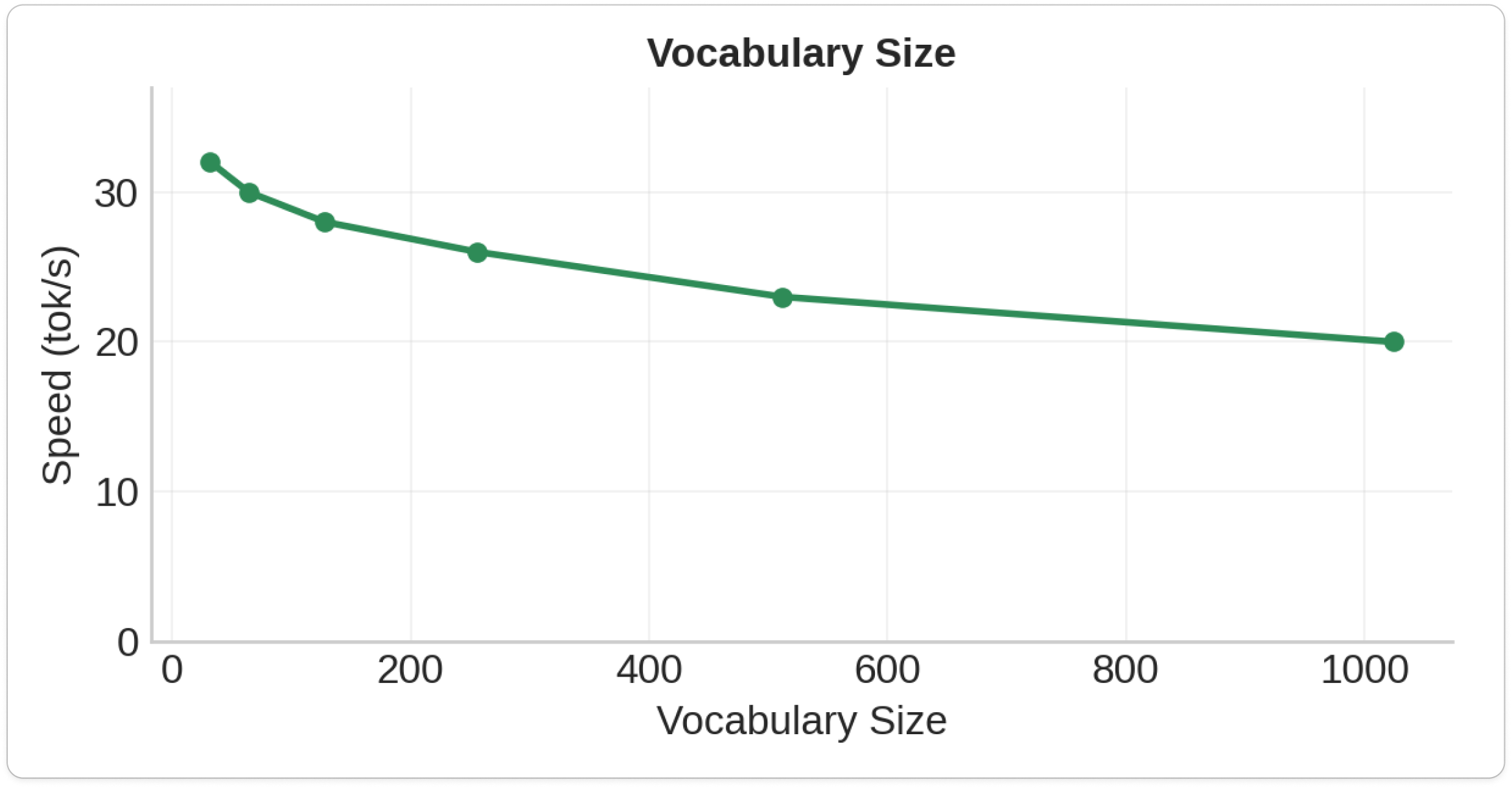

5. Vocabulary Size

In total, I tested 176 architectural variants, which I believe makes this the most comprehensive microcontroller transformer study so far.

Key Findings

Speed Results

- Fastest model: 32.0 tokens/second (dim = 1 architecture)

- Most balanced: 2-3 tokens/second (8K parameters)

- Memory limit: 256 vocabulary tokens maximum due to SRAM fragmentation

Microcontroller-Specific Insights

- Quantization paradox: Saves memory but slows down inference due to de-quantization overhead

- RP2040 bottleneck: Computation speed, not memory bandwidth

- KV caching: 3-7x speed improvement for multi-token generation
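To illustrate why KV caching helps with multi-token generation, here is a minimal single-head attention sketch in plain Python. It is not the project's actual inference code: the class `CachedHead`, the helper names, and the list-of-rows weight layout are all assumptions made for illustration. The cached path projects only the newest token into a key and a value, while the uncached path re-projects the whole prefix at every step, which is the work the cache avoids.

```python
import math

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def matvec(w, x):
    # w is a list of rows; returns w @ x
    return [dot(row, x) for row in w]

def softmax(scores):
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attend(q, keys, values):
    # Scaled dot-product attention for one query over all stored positions
    scale = 1.0 / math.sqrt(len(q))
    weights = softmax([dot(q, k) * scale for k in keys])
    dim = len(values[0])
    return [sum(w * v[i] for w, v in zip(weights, values)) for i in range(dim)]

class CachedHead:
    """Hypothetical single attention head that keeps past keys and values."""

    def __init__(self, wq, wk, wv):
        self.wq, self.wk, self.wv = wq, wk, wv
        self.keys = []
        self.values = []

    def step(self, x):
        # Only the new token is projected; earlier K/V come from the cache
        self.keys.append(matvec(self.wk, x))
        self.values.append(matvec(self.wv, x))
        return attend(matvec(self.wq, x), self.keys, self.values)

def attend_uncached(wq, wk, wv, xs):
    # Recomputes K and V for the entire prefix, then attends from the last token
    keys = [matvec(wk, x) for x in xs]
    values = [matvec(wv, x) for x in xs]
    return attend(matvec(wq, xs[-1]), keys, values)
```

Both paths return the same output for the latest position. The difference is that the uncached version does a number of key/value projections proportional to the prefix length at every step, whereas the cached version does a constant number, at the cost of holding the cache in SRAM.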

    # Maximum Speed (1K params)
    optimal_speed = {
        'vocab_size': '32-64',
        'dim': '1-2',
        'hidden_dim': '64-128',
        'n_layers': 1,
        'n_heads': '6-8',
        'expected_speed': '20-32 tok/s'
    }

    # Balanced Production (8K params)
    balanced_production = {
        'vocab_size': '256-512',
        'dim': '6-8',
        'hidden_dim': '192-256',
        'n_layers': '2-3',
        'n_heads': 8,
        'expected_speed': '2-5 tok/s'
    }
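To get a feel for how these settings translate into parameter counts, here is a rough estimator. The exact layer layout is not documented here, so everything in it is an assumption: a standard decoder-only transformer with four attention projection matrices, a two-matrix FFN, no biases, one scale vector per norm, and an output head that shares the embedding table by default. The function name `estimate_params` is hypothetical. Under these assumptions the number of attention heads does not change the count, since the heads only split the same projection matrices.

```python
def estimate_params(vocab_size, dim, hidden_dim, n_layers, tied_embeddings=True):
    """Rough parameter count for an assumed decoder-only transformer."""
    embedding = vocab_size * dim    # token embedding table
    attention = 4 * dim * dim       # Wq, Wk, Wv, Wo (no biases assumed)
    ffn = 2 * dim * hidden_dim      # up and down projections (no gating assumed)
    norms = 2 * dim                 # two norm scale vectors per layer
    per_layer = attention + ffn + norms
    final_norm = dim
    # An untied output head adds a second vocab_size x dim matrix
    head = 0 if tied_embeddings else vocab_size * dim
    return embedding + n_layers * per_layer + final_norm + head


# Upper end of the "Maximum Speed" ranges
speed_params = estimate_params(vocab_size=64, dim=2, hidden_dim=128, n_layers=1)

# One point inside the "Balanced Production" ranges
balanced_params = estimate_params(vocab_size=256, dim=8, hidden_dim=256, n_layers=2)

print(speed_params, balanced_params)
```

These estimates land in the same order of magnitude as the "1K" and "8K" labels but are not expected to match them exactly, because the real models may use a different FFN layout or embedding scheme. The sketch mainly shows that, at these sizes, the FFN and the embedding table dominate the count, while attention contributes very little when `dim` is this small.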

Training

I trained the models on an H100 rented from Prime Intellect. Each model takes about 2 minutes to train. All of the initial training took 12 hours in total (about $20).

Conclusion

Due to memory fragmentation on the RP2040, the maximum vocabulary size is 256 tokens. After $20+ of GPU time spent on pre-training, the models generate a few coherent words, which is promising. People on Reddit liked it.